
Data Analysis: More than Two Conditions

• Experiments often have more than 2 conditions

▪ Single-factor (IV) experiment with 3 or more levels

▪ Complex design experiment with 2 or more IVs

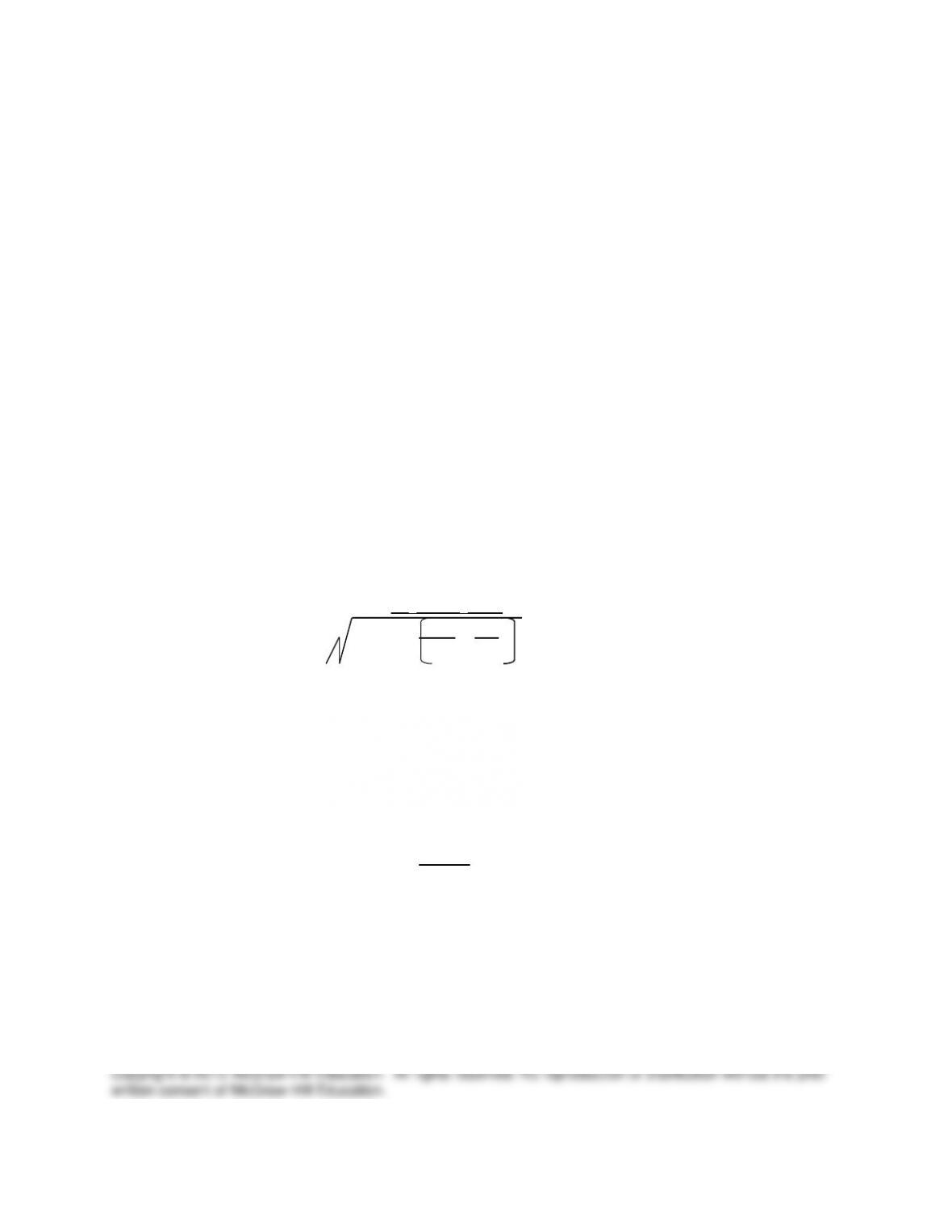

• Analysis of Variance (ANOVA)

▪ Most frequently used statistical procedure for more than 2 conditions

▪ Uses NHST

▪ Identifies whether IV produces statistically significant effect on DV

▪ Logic of ANOVA: Identify sources of variation in the data

o Error variation (“chance”)

o Systematic variation (effect of IV)

▪ Error variation (within-group)

o In a properly conducted random groups design, differences within each group should reflect error variation alone.

▪ Differences among participants (individual differences)

▪ Hold conditions constant to reduce error variation.

▪ Systematic variation (between-group)

o Second source of variation is between groups: the effect of the different IV conditions

o If H0 is true (no effect of IV, no difference between groups), any observed difference among groups is due to error variation alone.

o If H0 is false (IV has an effect):

▪ Means for experimental conditions should differ

▪ Differences should be systematic (due to IV)

▪ Differences among group means are due to effect of IV (systematic variation) plus error variation.
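
The logic above can be sketched in a few lines of Python. This is a minimal illustration, not a full analysis: the function name `one_way_anova`, the dictionary keys, and the scores are all hypothetical, and the sketch assumes a single-factor random groups design. It splits the variation in the data into a between-group part (systematic plus error) and a within-group part (error alone), then forms their ratio, the F statistic.

```python
from statistics import mean


def one_way_anova(groups):
    """Partition variation for a single-factor design (illustrative sketch).

    `groups` is a list of lists, one list of DV scores per IV condition.
    """
    all_scores = [x for g in groups for x in g]
    grand_mean = mean(all_scores)
    k = len(groups)            # number of conditions (levels of the IV)
    n_total = len(all_scores)  # total number of participants

    # Between-group variation: how far each group mean is from the grand mean.
    # Reflects the effect of the IV (if any) plus error variation.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)

    # Within-group variation: how far each score is from its own group mean.
    # Reflects error variation alone (individual differences, chance).
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    ms_between = ss_between / (k - 1)       # df between = k - 1
    ms_within = ss_within / (n_total - k)   # df within = N - k

    return {
        "ms_between": ms_between,
        "ms_within": ms_within,
        "F": ms_between / ms_within,
    }


# Hypothetical scores for three conditions (made up for illustration only).
scores = [[4, 5, 6], [6, 7, 8], [8, 9, 10]]
result = one_way_anova(scores)
print(result)
```

If H0 is true, both mean squares estimate error variation only, so F should be close to 1. If the IV has an effect, the between-group mean square also contains systematic variation, so F tends to be larger than 1. Judging whether an observed F is statistically significant still requires comparing it with the F distribution for the relevant degrees of freedom, which this sketch does not do.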